Rancher - Deploying A Load Balancer

If you're like me, you have a lot of services that are web apps running on port 80. It would be lame if we had to add a host to Rancher for every web application just to have a free IP on port 80 to give to the app. Instead, we can have Docker assign random host ports to our containers and use a load balancer to route the traffic as necessary. This also gives us the added benefit of handling infrastructure degradation gracefully, as we will see later.

For this tutorial, we will be using Rancher's default "cattle" environment and its built-in HAProxy load balancing service.

Requirements

For this tutorial, I am using 3 Docker hosts attached to my Rancher server, but you can get by with just 2. Each host needs only a minimal amount of RAM.

Related Posts

- Rancher tutorial map - for all Rancher related tutorials on this blog.

Steps

First, we need to deploy our web application. For this tutorial, I am going to use the tutum/hello-world example image, which displays a web page like the one below:

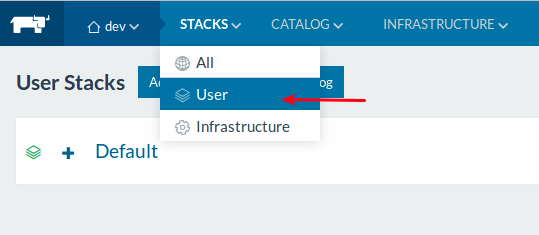

Click on STACKS, and then User.

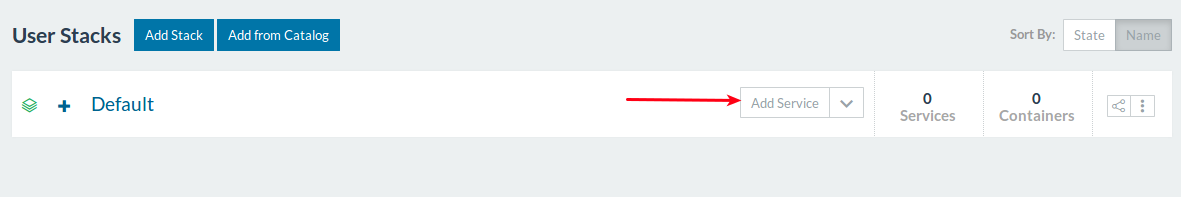

Then click the Add Service button (making sure not to click the down arrow).

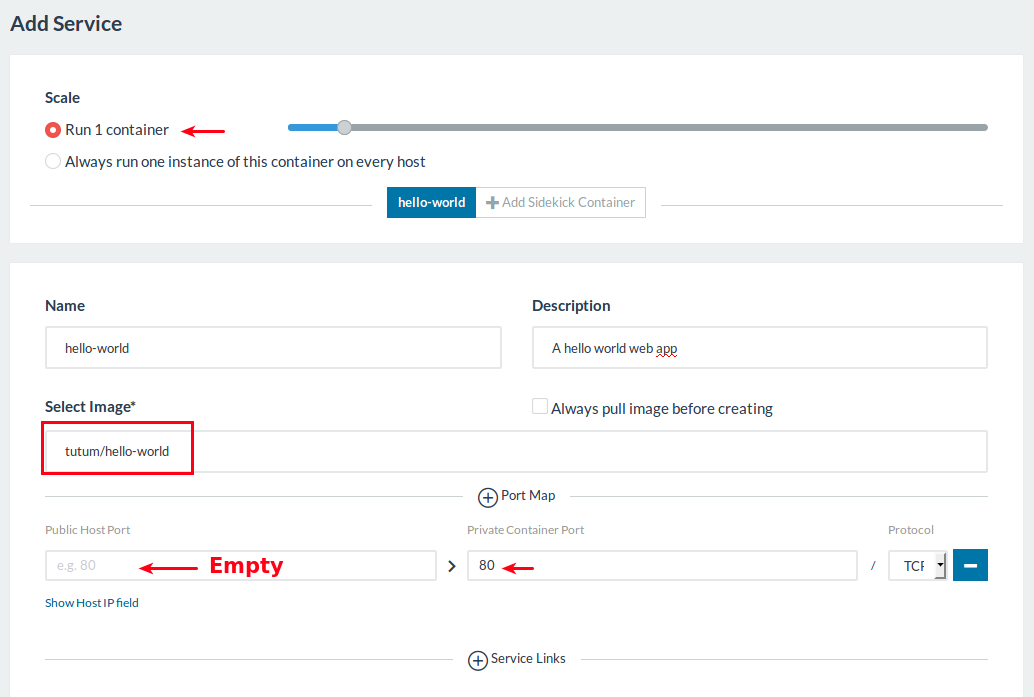

Fill in the settings as shown below. I have highlighted the important bits.

I deliberately chose to only run 1 container so that there is definitely a host that is not running the service. This helps demonstrate the benefits of the load balancer later.

I deliberately kept the public host port empty so that one will be randomly assigned. The load balancer will automatically take care of this later. However, we do need to specify that it maps to the container's port 80.

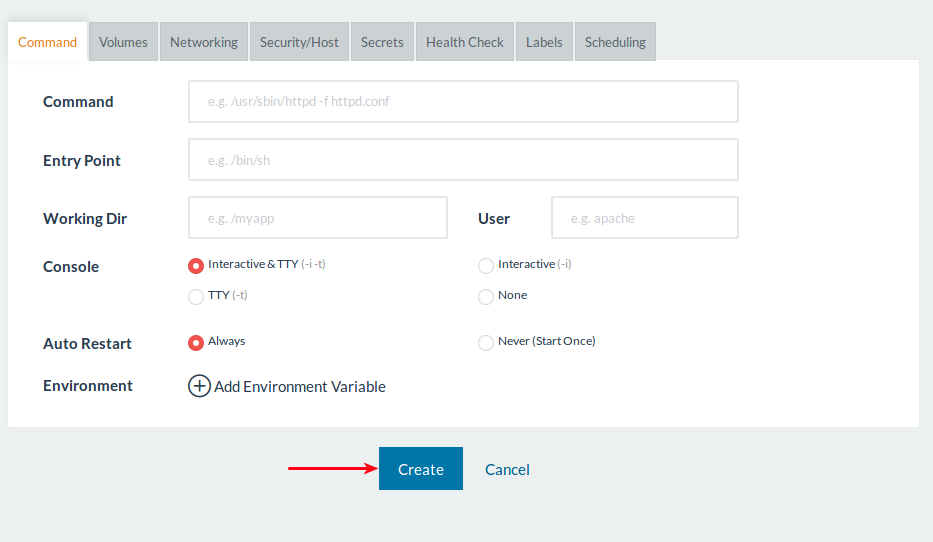

After having filled in those settings, scroll down and click Create. Don't bother changing any of the settings in the bottom half as we don't need those for now.

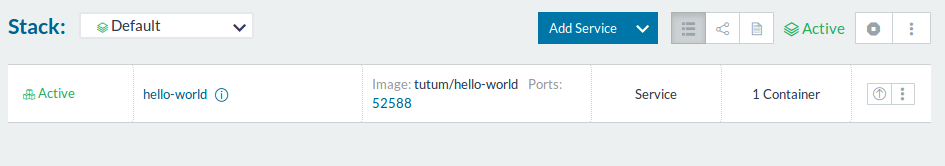

You should now see your newly deployed service. In my case, it has been given a port of 52588.

Now let's deploy a load balancer to route traffic to that service. We will configure our load balancer so that no matter which node in our cluster gets hit on port 80 with a request, the request gets forwarded to that service, whichever host it may be on at the time.

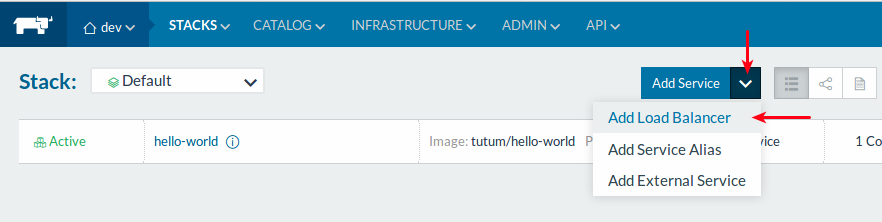

Click the down-arrow (chevron) button beside the Add Service option. Then click the Add Load Balancer option.

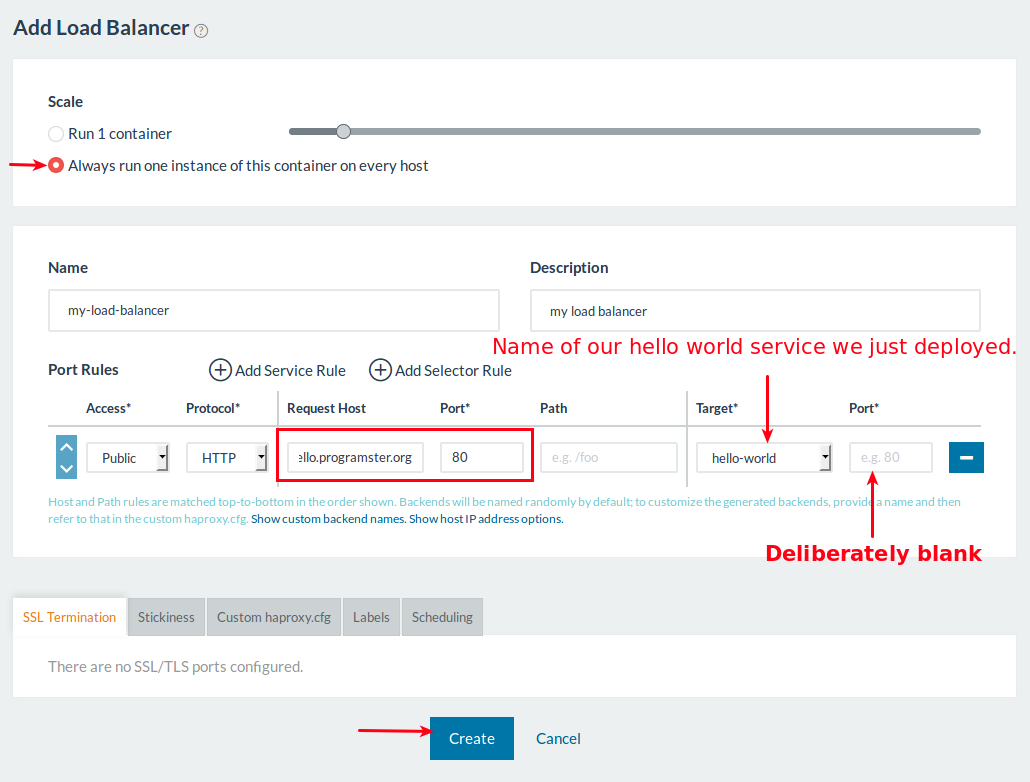

Fill in the details for the load balancer as shown below and click Create.

By choosing to run the load balancer on every host, we can have our DNS server point to every host in our cluster, spreading the load and handling a host going down more gracefully. Alternatively, run just 1 load balancer container on one host and have your DNS server point only there. However, you will then need to make sure that host never goes down, and it will need to be powerful enough to handle all incoming traffic.
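To make the failover idea concrete, here is a minimal Python sketch of what a client effectively does when a DNS name resolves to several hosts: try each address until one accepts a connection. The addresses in the comment are placeholders, not values from this tutorial, and real clients (browsers, resolvers) differ in how they retry, so treat this as an illustration rather than a description of any particular client.

```python
import socket


def first_reachable(addresses, timeout=1.0):
    """Return the first (ip, port) pair that accepts a TCP connection, or None.

    `addresses` stands in for the A records a DNS name would resolve to.
    """
    for ip, port in addresses:
        try:
            # Open and immediately close a TCP connection to see if anything is listening.
            with socket.create_connection((ip, port), timeout=timeout):
                return (ip, port)
        except OSError:
            # Refused or timed out: treat this host as down and try the next one.
            continue
    return None


# Example with placeholder IPs (replace with your own hosts):
# first_reachable([("192.168.1.11", 80), ("192.168.1.12", 80), ("192.168.1.13", 80)])
```

With a load balancer on every host, any address in the list is a valid entry point, so one host going down only removes one option.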

By having the target port blank, the load balancer will automagically use the random port assigned to that service.

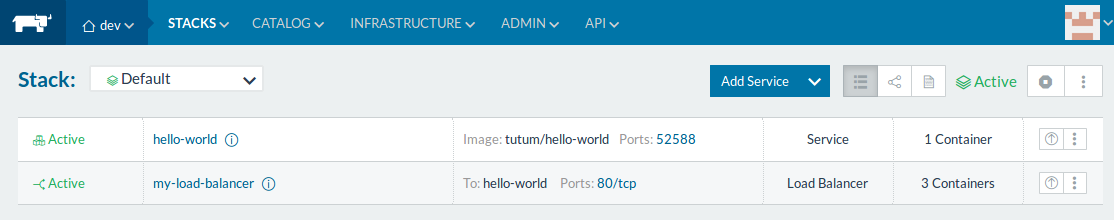

You will now see your hello world and load balancer services running.

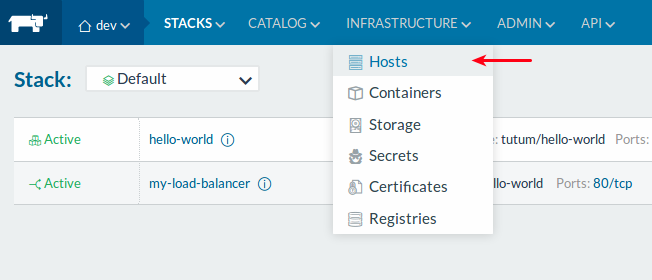

Go to the hosts page by clicking Infrastructure and then Hosts.

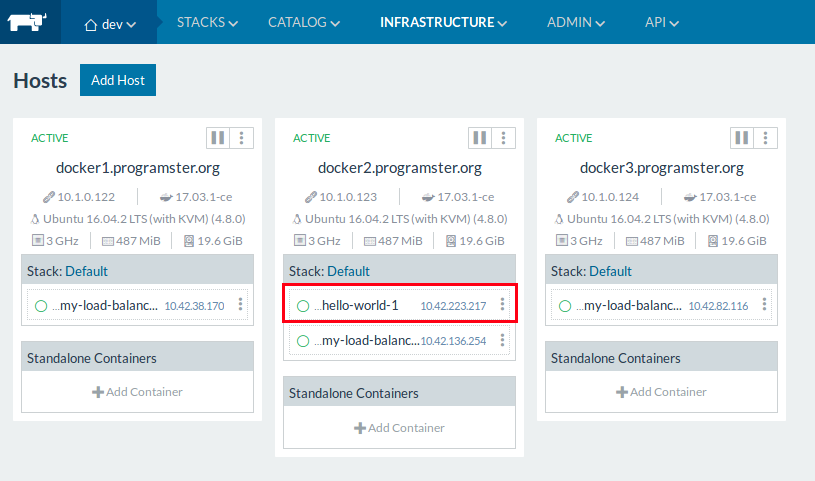

You should now be able to see which host our hello-world web application is deployed on, and see that there is a load balancer on every host.

Testing

Now, to test that everything is working, update your DNS server (or just your computer's /etc/hosts file) to point the URL you plugged into the load balancer at each of your hosts' IPs, one at a time. You should see the hello-world output each time!

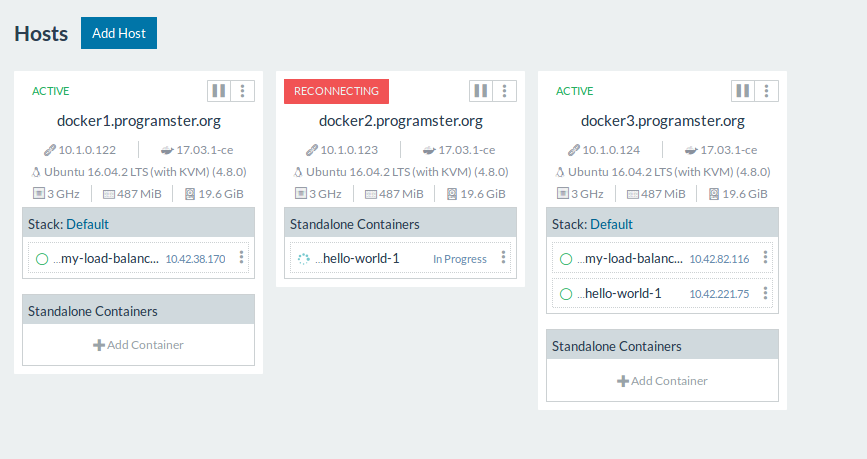
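If you would rather not edit DNS or /etc/hosts, the same check can be scripted by sending a request straight to each host's IP with the Host header set by hand. This is a sketch, assuming the load balancer routes on the request hostname; the hostname and IPs in the comment are placeholders, not values from this tutorial.

```python
import http.client


def fetch_via_host(ip, hostname, port=80, timeout=5.0):
    """GET / from `ip`, presenting `hostname` in the Host header.

    Returns (status_code, body_text).
    """
    conn = http.client.HTTPConnection(ip, port, timeout=timeout)
    try:
        # Supplying our own Host header stops http.client from adding one based on the IP.
        conn.request("GET", "/", headers={"Host": hostname})
        response = conn.getresponse()
        return response.status, response.read().decode("utf-8", errors="replace")
    finally:
        conn.close()


def check_hosts(ips, hostname, port=80):
    """Return {ip: status code, or the error message if the host could not be reached}."""
    results = {}
    for ip in ips:
        try:
            status, _body = fetch_via_host(ip, hostname, port)
            results[ip] = status
        except OSError as exc:
            results[ip] = str(exc)
    return results


# Example with placeholder values (replace with your own hostname and host IPs):
# check_hosts(["192.168.1.11", "192.168.1.12", "192.168.1.13"], "hello.example.com")
```

Every host that runs a load balancer should report the same status code, regardless of which host the hello-world container is actually on.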

Taking things further, we can test the durability of our infrastructure by connecting over SSH to the host running our hello-world container and shutting it down. At first, Rancher will wait for the host to come back, but eventually it will re-deploy the container to another host, as shown below:

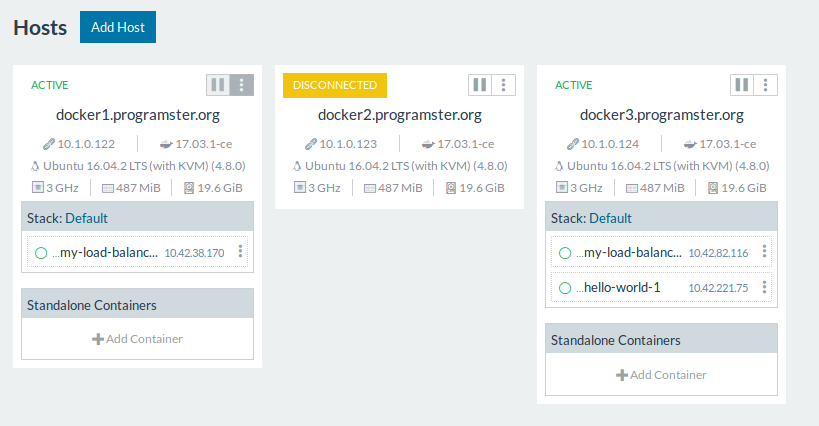
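To watch the recovery from the outside, you could poll the service until it answers again. The helper below is a generic sketch: `check` is any zero-argument function you supply that returns True when the site responds, and the attempt count and delay are arbitrary defaults, not Rancher settings.

```python
import time


def wait_until_healthy(check, attempts=30, delay=10.0):
    """Call `check()` until it returns True.

    Returns the number of attempts used, or None if it never succeeded.
    """
    for attempt in range(1, attempts + 1):
        try:
            if check():
                return attempt
        except OSError:
            # Connection errors just mean the service is not back yet.
            pass
        if attempt < attempts:
            time.sleep(delay)
    return None
```

For `check`, a small function that requests the page through one of the surviving hosts and returns True on a 200 status would do.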

After a bit more time, the host will show up as disconnected, at which point you know you need to either bring the host back up or use the menu options to Deactivate and then Delete the host.

First published: 16th August 2018